The Nokia N900

I got a Nokia N900 for Christmas. Well, to be more accurate, I got a Nokia N900 after Christmas. Some friends of ours were spending Christmas in the States, so I ordered one from Amazon and had it shipped to their home. It was a full $150 cheaper than it would have been here. The bummer was having to wait until they got back to Beirut to open it.

So, I’ve been using it for the last few weeks and there are some seriously cool things, as well as some things that are less cool.

First off, the good:

- Root access

- Native X Window System (X11), so most programs run on it without having to modify the source

- A very sensitive touch screen

- Nice effects

- SSH server and client

- An 800×480 display crammed into 3.5″

- Community-run repositories so I can roll and publish my own packages

- And finally (just in case I forgot), root access

But there is also some bad:

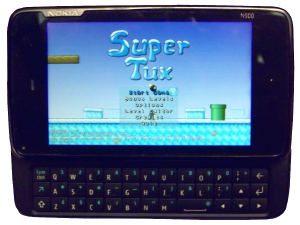

- OpenGL ES 2.0: One of the first things I wanted to do with this phone was play BZFlag on it. Nope. It's not in the repositories, and it can't be compiled because it requires glBitmap(), glRasterPos3f() and various other functions that aren't available in either OpenGL ES 2.0 or 1.1. I understand the motivation behind ES, but surely there is some value in an OpenGL-to-ES wrapper. I've found one here, but it doesn't implement the above functions. I'm hoping to do some work on this myself, but I'm no OpenGL expert. So for now I'm stuck playing SuperTux (in non-OpenGL mode).

- Ovi Maps: Yeah, I got all excited about Ovi Maps 3.0.3, which is supposed to be super cool with voice guidance and turn-by-turn directions, but I'm not able to upgrade from 1.0.1. Apparently the N900 was left out of the first batch of smartphones to get the update. Having said that, the Lebanon maps in 1.0.1 are practically useless, so unless 3.0.3 includes better maps, it will all be pointless anyway.

- Skype: There's no video. The phone even has a second (crappy) camera that faces the user, but video calling just isn't available. Unfortunately, I don't have my family on Google Talk yet, so I haven't been able to test whether video works any better there.
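To make the OpenGL ES complaint above a little more concrete: a wrapper can't simply forward glBitmap() to ES, because ES has no bitmap drawing path at all. One common workaround is to expand the 1-bit bitmap into an RGBA texture and draw it as a blended quad. The C sketch below covers only that expansion step. The function name `bitmap_to_rgba` is hypothetical (it isn't taken from the wrapper mentioned above or any other), and it assumes desktop GL's default unpack state: most significant bit first, with each row padded to `alignment` bytes (GL's default is 4).

```c
#include <stdint.h>
#include <stdlib.h>
#include <string.h>

/*
 * Hypothetical helper for an OpenGL -> OpenGL ES wrapper: expand glBitmap()-style
 * 1-bit data into RGBA8 texels that ES can upload with glTexImage2D().
 * Assumes the desktop GL default unpack state: most significant bit first
 * (GL_UNPACK_LSB_FIRST == GL_FALSE) and rows padded to `alignment` bytes
 * (GL_UNPACK_ALIGNMENT, default 4). Rows keep their bottom-to-top order,
 * which is also the row order glTexImage2D() expects.
 * Returns a malloc'd buffer of width * height * 4 bytes (caller frees), or NULL.
 */
uint8_t *bitmap_to_rgba(int width, int height, const uint8_t *bits,
                        int alignment, const uint8_t color[4])
{
    if (width <= 0 || height <= 0 || alignment <= 0)
        return NULL;

    int row_bytes = (width + 7) / 8;                                   /* bytes of real data per row */
    int stride = (row_bytes + alignment - 1) / alignment * alignment;  /* padded row length */

    uint8_t *out = malloc((size_t)width * (size_t)height * 4);
    if (out == NULL)
        return NULL;

    for (int y = 0; y < height; ++y) {
        const uint8_t *row = bits + (size_t)y * (size_t)stride;
        for (int x = 0; x < width; ++x) {
            int set = (row[x / 8] >> (7 - x % 8)) & 1;   /* MSB is the leftmost pixel */
            uint8_t *px = out + ((size_t)y * (size_t)width + (size_t)x) * 4;
            if (set)
                memcpy(px, color, 4);                    /* 1 bit: current raster color */
            else
                memset(px, 0, 4);                        /* 0 bit: fully transparent */
        }
    }
    return out;
}
```

The returned buffer could then be uploaded with `glTexImage2D(GL_TEXTURE_2D, 0, GL_RGBA, width, height, 0, GL_RGBA, GL_UNSIGNED_BYTE, buf)` and drawn with blending enabled, so that transparent texels leave the framebuffer untouched, the way 0 bits do with glBitmap(). Emulating glRasterPos3f() would additionally mean projecting the raster position through the wrapper's own matrices, since ES 2.0 has no fixed-function transform state.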

Despite the above flaws, I am extremely happy with this phone. I can hack on it without having to jailbreak it. What more can you ask for? Aside from a reasonable data plan from MTC Touch (my local cell phone service provider)…